Technical Changes Have The Largest Impact On SEO Performance

Understanding Google ranking factors, and how the value of “PageRank” flows through your domain, is critical for eCommerce marketing executives who want to maximize organic search performance.

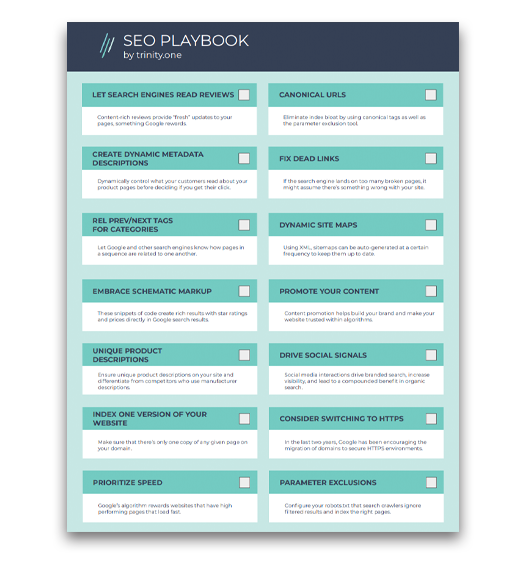

Getting eCommerce SEO right means properly configuring the tagging and technology on your merchandising pages. Simply put, if your site architecture presents duplication, pagination, or semantic issues to Google, you are not performing at your best in organic search.

This post explains how to identify these issues and address them with sound technology practices. We have broken the topic into easy-to-understand components to give eCommerce professionals clear SEO directives.

How Search Engines View Your Website

Search engines follow a methodology based on “discovery” and “indexing”. They use programs often called robots, or “bots” for short, to discover websites and the pages and content those websites contain.

In some ways, search engines work like file cabinets. They capture individual webpages, classify them based on semantics and on-page elements, and rank them using hundreds of algorithmic factors that apply across a website.

eCommerce websites present unique challenges. Unlike standard content sites (think of a site like CNN.com), where an article or video may have only one core URL, eCommerce systems can generate hundreds, sometimes thousands, of permutations of a single URL. These permutations come from the filters and other faceted navigation built into eCommerce navigation systems.
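To see how quickly faceted navigation multiplies URLs, here is a minimal Python sketch. The facet names, values, and the query-string URL format are hypothetical; the point is only that each facet that can be left unset contributes (number of values + 1) options, and the options multiply.

```python
from itertools import product

# Hypothetical facets for a rug category page.
FACETS = {
    "color": ["red", "blue", "beige"],
    "size": ["5x8", "8x10"],
    "price": ["under-100", "100-250", "over-250"],
    "sort": ["low", "high", "new"],
}

def facet_urls(base: str, facets: dict[str, list[str]]) -> list[str]:
    """Return every URL a crawler could reach by combining facet values."""
    names = list(facets)
    # None means "facet not applied", so each facet offers len(values) + 1 options.
    options = [[None, *facets[name]] for name in names]
    urls = []
    for combo in product(*options):
        params = [f"{n}={v}" for n, v in zip(names, combo) if v is not None]
        urls.append(base + ("?" + "&".join(params) if params else ""))
    return urls

urls = facet_urls("https://www.yoursite.com/rugs", FACETS)
print(len(urls))  # (3+1) * (2+1) * (3+1) * (3+1) = 192
```

One category page with four modest facets already yields 192 crawlable URLs, and that is before counting different parameter orders or pagination.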

These additional permutations create problems for search engines, which end up discovering and categorizing hundreds of duplicate pages from a single product catalog. Crawling resources may be wasted on the duplicates, which can leave some pages uncaptured (i.e., left out of the file cabinet).

When building a website, designers, developers, and marketers alike must consider three key components of a search-friendly eCommerce system:

- Duplication – How your site presents content optimally to search engines

- Pagination – How your site merchandises items through connected pages

- Classification – How your site maximizes semantic search (i.e., leverages Google’s Hummingbird algorithm)

This post discusses the first of these core areas, duplication, and offers insights into avoiding pitfalls in your eCommerce SEO efforts.

Solving Page Duplication On eCommerce Websites

Page duplication creates index bloat within search engines. Index bloat can dilute the SEO value of primary catalog pages and hurt the rankings of categories and products.

Most large U.S. retailers either rely on the core search and navigation systems built into their current platform or “bolt on” third-party hosted systems that enhance the user search experience. Either way, these systems create page permutations, so numerous URLs exist for a single page.
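One simple way to identify these duplicates in a crawl export is to fingerprint each page’s text and group URLs that share a fingerprint. The sketch below assumes you already have a mapping of URL to HTML (the pages shown are hypothetical) and only catches exact text matches; near-duplicates such as re-sorted grids need a fuzzier comparison.

```python
import hashlib
import re
from collections import defaultdict

def fingerprint(html: str) -> str:
    """Hash a page's text, ignoring tags, whitespace, and letter case."""
    text = re.sub(r"<[^>]+>", " ", html)   # crude tag stripping, fine for a sketch
    text = " ".join(text.split()).lower()   # collapse whitespace, normalize case
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def duplicate_clusters(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs with identical page text; return only groups of 2+ URLs."""
    groups = defaultdict(list)
    for url, html in pages.items():
        groups[fingerprint(html)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]

# Hypothetical crawl output: URL -> HTML.
pages = {
    "https://www.yoursite.com/rugs": "<h1>Rugs</h1><p>Red rug</p>",
    "https://www.yoursite.com/rugs?sessionid=42": "<h1>Rugs</h1>\n<p>Red rug</p>",
    "https://www.yoursite.com/lamps": "<h1>Lamps</h1>",
}
print(duplicate_clusters(pages))
```

Here the session-ID variant is grouped with the clean rugs URL, while the lamps page stands alone.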

Using technology to fix these issues is a powerful lever for organic search efficiency. Let’s get started.

Get Product Attributes Right

Within your current category and family presentation pages, identify the obvious filtering attributes and how they affect both category and product page URLs.

Price, brand, color, size, and clearance are attributes that nearly all retailers use within their eCommerce systems. Progressive retailers, especially niche brands operating in a unique segment, go beyond these standard attributes and build category-specific attributes that help users find the products that are right for them (e.g., memory type for computers).

Clean Up URL Structure

All of these options are a major headache for search engine crawlers, which wander through the resulting permutations and must guess which pages are the primary ones to index and rank.

Example:

www.yoursite.com/category=rugs

www.yoursite.com/category=rugs/&sort=low

In this simplified example, sorting the category page by lowest price has produced a new URL. Sorting does not change the page in any material way, so the second URL only adds bloat when engines crawl both pages.
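A common technical fix is to normalize URLs before they are linked or rendered, so presentation-only parameters never create new addresses. This minimal sketch assumes standard query-string URLs and a hypothetical list of parameters (sort, sessionid, view) that do not change page content; your platform’s actual parameter names will differ.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical parameters that change presentation but not page content.
NON_CONTENT_PARAMS = {"sort", "sessionid", "view"}

def normalize_url(url: str) -> str:
    """Drop presentation-only query parameters and order the rest consistently."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
            if k.lower() not in NON_CONTENT_PARAMS]
    kept.sort()  # the same filters in a different order map to one URL
    # Lowercase the host and drop any #fragment, which crawlers ignore anyway.
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path,
                       urlencode(kept), ""))

print(normalize_url("https://www.yoursite.com/rugs?sort=low"))
# https://www.yoursite.com/rugs
print(normalize_url("https://www.yoursite.com/rugs?size=5x8&color=red&sort=high"))
# https://www.yoursite.com/rugs?color=red&size=5x8
```

Sorting the remaining parameters is a deliberate choice: it collapses “color then size” and “size then color” into one URL.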

Canonicalize Product Pages

Another common issue is the same product page being published at multiple URLs. For example, a product page may receive a dynamic URL based on the user’s session or search preferences.

Example:

http://www.yoursite.com/products?category=rugs&color=red&id=123

http://www.yoursite.com/rugs/red/123.html

Both URLs lead to the same product, but because they are different URLs, search engines treat them as duplicates.
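If your platform can produce both forms, it helps to have one function that maps the dynamic version to the static one, so every template links to (and canonicalizes on) the same address. This sketch assumes the exact URL pattern from the example above; the function name is hypothetical, and a URL missing any of the three parameters raises a KeyError.

```python
from urllib.parse import urlsplit, parse_qs

def static_product_url(dynamic_url: str) -> str:
    """Map /products?category=..&color=..&id=.. to /category/color/id.html."""
    parts = urlsplit(dynamic_url)
    q = parse_qs(parts.query)
    category, color, pid = q["category"][0], q["color"][0], q["id"][0]
    return f"{parts.scheme}://{parts.netloc}/{category}/{color}/{pid}.html"

print(static_product_url(
    "http://www.yoursite.com/products?category=rugs&color=red&id=123"))
# http://www.yoursite.com/rugs/red/123.html
```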

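Another common issue is the same URL being published in both secure (HTTPS) and non-secure (HTTP) versions at the same time:

Example:

http://www.yoursite.com/rugs/red/123.html

https://www.yoursite.com/rugs/red/123.html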

Lastly, a product can be published in multiple category locations within your site, creating duplicate content. Here is a typical scenario:

Example:

http://www.yoursite.com/rugs/red/id=123

https://www.yoursite.com/home/red/id=345

In all of these examples, a retailer must designate one URL as the primary version so that Google sees a single page and the duplicate content risk is mitigated. To help Google index these pages correctly, your business should use one of two tactics.
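Before choosing a tactic, you need a consistent rule for which URL in a duplicate group is the primary one. The rules below (prefer HTTPS, prefer a clean path without a query string, then prefer the shortest URL) are hypothetical house rules for illustration, not anything Google prescribes.

```python
from urllib.parse import urlsplit

def pick_primary(candidates: list[str]) -> str:
    """Choose one URL from a duplicate group using simple, hypothetical rules."""
    def score(url: str) -> tuple:
        parts = urlsplit(url)
        return (
            parts.scheme != "https",  # False sorts first: prefer the secure version
            bool(parts.query),        # prefer clean paths without query strings
            len(url),                 # then prefer the shortest URL
            url,                      # alphabetical tie-break keeps results stable
        )
    return min(candidates, key=score)

group = [
    "http://www.yoursite.com/products?category=rugs&color=red&id=123",
    "http://www.yoursite.com/rugs/red/123.html",
    "https://www.yoursite.com/rugs/red/123.html",
]
print(pick_primary(group))  # https://www.yoursite.com/rugs/red/123.html
```

Whatever rules you choose, the important part is that they are deterministic, so the same page always resolves to the same primary URL.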

For most retailers, the canonical tag works very well. It tells search engines which version of a page should be treated as the primary version and given the most weight in rankings. Canonical tags can be tricky to implement, so you should have a developer handle the integration.
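The tag itself is a single link element with rel="canonical" in the page’s head. This sketch shows how a template helper might render it; the function name is hypothetical, and it refuses relative URLs because the pitfalls below call for full URLs.

```python
from html import escape
from urllib.parse import urlsplit

def canonical_link_tag(primary_url: str) -> str:
    """Build the canonical <link> element for a duplicate page's <head>."""
    parts = urlsplit(primary_url)
    if not (parts.scheme and parts.netloc):
        raise ValueError(f"canonical URL must be absolute: {primary_url!r}")
    # Escape &, <, >, and quotes so the URL is safe inside an HTML attribute.
    return f'<link rel="canonical" href="{escape(primary_url, quote=True)}">'

print(canonical_link_tag("https://www.yoursite.com/rugs/red/123.html"))
# <link rel="canonical" href="https://www.yoursite.com/rugs/red/123.html">
```

Every duplicate in a group (the dynamic URL, the HTTP version, the alternate category path) would render this same tag pointing at the one primary URL.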

Be careful to avoid these landmines during integration:

- Pointing your canonical tag to the first page of a paginated series (think page 1 of an 8-page result set for the rug page above). In these cases, point the tag to the “view all” page instead.

- Using relative URLs (use full, absolute URLs, including the protocol and www host)

- Self-referencing canonical tags (tags that point to the same URL as the page they are placed on)

- Canonicalizing to a page whose content does not match (e.g., a canonical on an article pointing to a sub-category merchandise grid page)

- Placing the canonical tag in the <body> of your HTML document (it must always be in the <head>)
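Two of these landmines, relative URLs and tags placed in the body, can be caught mechanically during QA. This sketch uses Python’s built-in HTML parser to audit a single page; the class and function names are hypothetical, and the remaining pitfalls (pagination targets, mismatched content) still need human judgment.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit

class CanonicalAudit(HTMLParser):
    """Flag canonical tags outside <head> and canonical tags with relative URLs."""

    def __init__(self) -> None:
        super().__init__()
        self.in_head = False
        self.problems: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "link":
            a = dict(attrs)
            # rel may hold several space-separated tokens.
            if "canonical" not in (a.get("rel") or "").lower().split():
                return
            href = a.get("href") or ""
            if not self.in_head:
                self.problems.append(f"canonical outside <head>: {href}")
            if not urlsplit(href).netloc:
                self.problems.append(f"relative canonical URL: {href}")

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False

def audit(html: str) -> list[str]:
    parser = CanonicalAudit()
    parser.feed(html)
    parser.close()
    return parser.problems

page = """<html><head><title>Red rug</title></head>
<body><link rel="canonical" href="/rugs/red/123.html"></body></html>"""
print(audit(page))
```

For this page the audit reports both problems: the tag sits in the body, and its href is relative.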

By including canonical tags, you help Google crawl your site more efficiently and map your key pages correctly in its index. We have seen retailers gain 10-20% in incremental long-tail traffic by integrating this type of tag.

Block Potential Duplicated Pages In Robots.txt

Another option is to use your robots.txt file to block pages or directories from being crawled. This is a cumbersome solution that can cause numerous issues, and it is not practical at the scale of thousands of pages or more.
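If you do go this route, test the rules before deploying them. This sketch uses Python’s standard urllib.robotparser to check which URLs a hypothetical robots.txt would block; the /search/ and /compare/ directories are assumptions for illustration. Note that this parser uses simple path-prefix matching and does not implement Google’s * and $ wildcard extensions, so the sketch sticks to directory rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks whole directories of duplicate-prone pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /compare/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in [
    "https://www.yoursite.com/search/red-rugs",
    "https://www.yoursite.com/rugs/red/123.html",
]:
    status = "crawlable" if parser.can_fetch("Googlebot", url) else "blocked"
    print(url, "->", status)
```

Keep in mind that robots.txt controls crawling, not indexing: a blocked URL can still appear in results if other pages link to it.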

A better alternative, if you cannot use the canonical tag in your current environment, is to exclude pages at the HTML document level with noindex meta tags.
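A noindex tag is a robots meta element in the page’s head, and templates can decide per URL whether to emit it. This sketch reuses the idea of presentation-only parameters; the parameter names and function name are hypothetical. One caution: a crawler has to fetch a page to see its noindex tag, so do not also block these URLs in robots.txt.

```python
from urllib.parse import urlsplit, parse_qsl

# Hypothetical query parameters whose pages should stay out of the index.
NOINDEX_PARAMS = {"sort", "sessionid", "view"}

def robots_meta(url: str) -> str:
    """Return the robots meta tag to render in the <head> of the page at url."""
    params = {k.lower() for k, _ in parse_qsl(urlsplit(url).query)}
    if params & NOINDEX_PARAMS:
        # Keep the page out of the index but let crawlers follow its product links.
        return '<meta name="robots" content="noindex, follow">'
    return ""  # no tag needed: indexing is the default

print(robots_meta("https://www.yoursite.com/rugs?sort=low"))
# <meta name="robots" content="noindex, follow">
```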

So you have gotten rid of the bloat. What’s next? In our next post on eCommerce SEO, we will discuss the dynamics of pagination and why it is so critical to eCommerce performance.